The human experience is defined by a series of fleeting moments that we desperately try to capture through the lens of a camera. However, static photography often leaves a void where the true essence of a memory should be, failing to replicate the subtle rustle of leaves or the gentle movement of a smile. This limitation often results in a sense of emotional detachment from our own history, as frozen frames can feel cold and distant compared to the vivid reality we remember. To overcome this sensory gap, many are turning to Image to Video AI to infuse their archives with life, creating a bridge between the stillness of the past and the dynamic energy of the present.

As we look deeper into the psychological impact of visual media, it becomes clear that our brains are naturally wired to respond more intensely to motion. A static image requires the viewer to perform the cognitive labor of imagining movement, which can sometimes diminish the immersive quality of the memory. By contrast, a moving sequence allows the observer to sink into the narrative, experiencing the scene as a living entity rather than a historical artifact. This shift in perspective is fundamental to how we maintain our emotional heritage in a digital-first world where attention is scarce and engagement is everything.

The democratization of these sophisticated animation tools means that professional-grade storytelling is no longer the exclusive domain of high-budget film studios. By utilizing a streamlined Photo to Video workflow, even a novice creator can produce cinematic results that resonate with authenticity and depth. This technical accessibility is transforming the way we document our lives, allowing us to see our ancestors and our adventures through a lens of fluid motion. In my observation, the ability to synthesize time within a single file is the most significant advancement in personal media since the invention of digital photography itself.

While the potential for creative expression is vast, the technology rests on neural networks trained to predict how light, geometry, and motion should evolve over a five-second window. These models infer depth and structure from the 2D source image so that the resulting motion looks physically plausible. Although the results are often stunning, the AI works best as a collaborator: it needs a clear creative vision and a high-quality source image to produce the best possible outcome.


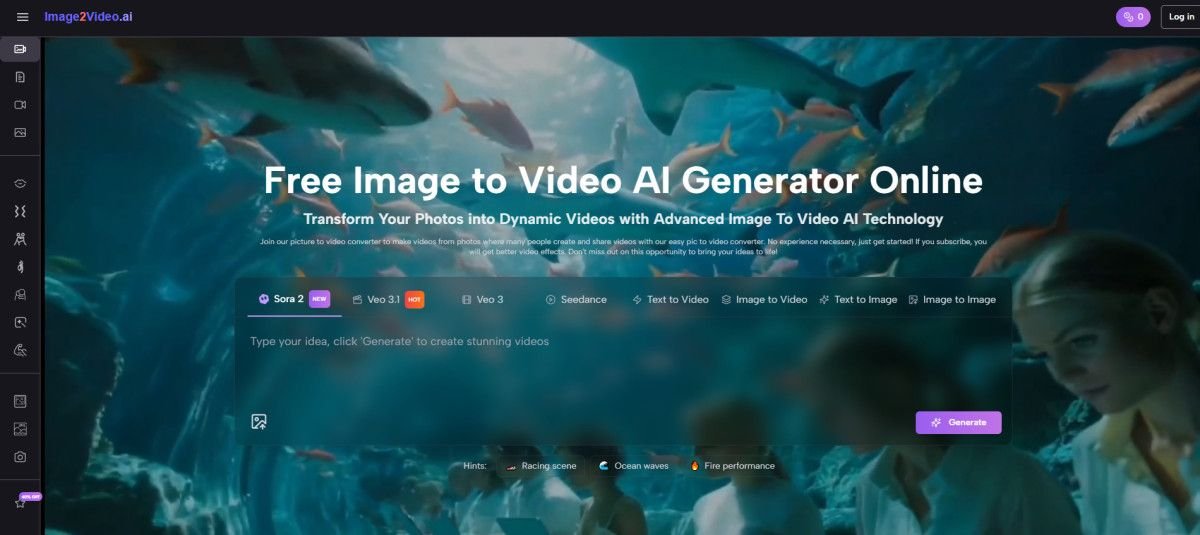

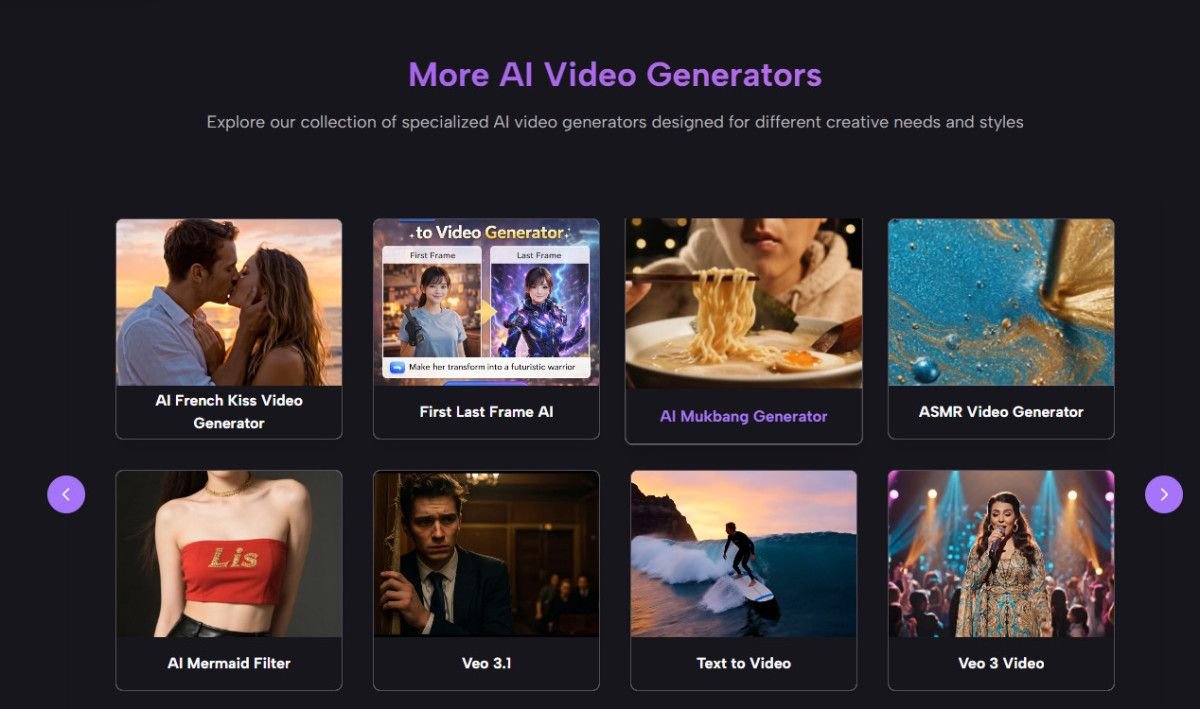

A Curated Directory Of Leading Platforms For Image Animation

The following list covers widely used platforms and models for converting static images into high-quality video. Together they offer capabilities suited to both personal and professional use.

- image2video.ai

- Runway Gen-2

- Pika Labs

- Luma Dream Machine

- Kling AI

- Sora by OpenAI

- Leonardo AI

- Kaiber AI

- HeyGen

- Stable Video Diffusion

Systematic Operational Procedures For Creating High-Fidelity Video Clips

The process of generating motion from a static source is designed to be intuitive and efficient. Following the official workflow step by step helps the AI engine translate the user's creative vision accurately.

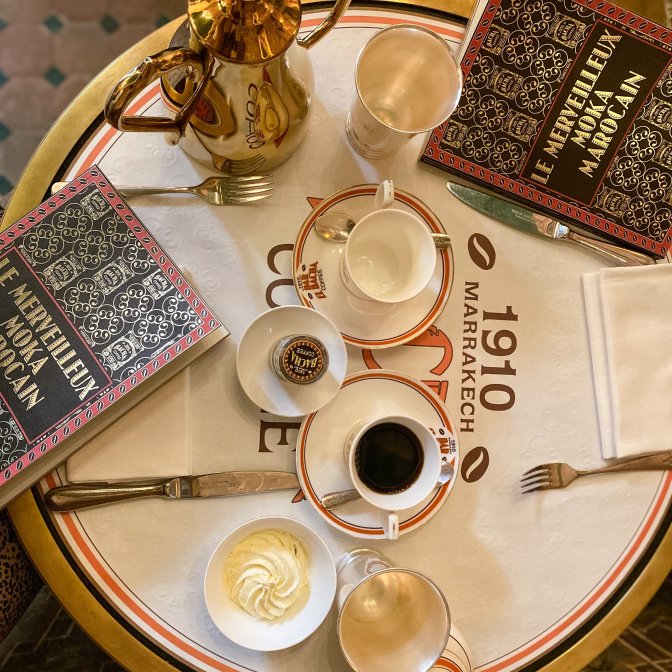

The Initial Phase Of Asset Preparation And Data Upload

The first step requires the selection of a high-resolution image in either JPEG or PNG format. This file serves as the foundation for the entire generation process, and its clarity directly impacts the stability of the final video. Once the image is selected, it is uploaded to the secure web interface.
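A quick pre-upload check can catch files that are not actually JPEG or PNG. The sketch below is a hypothetical helper, not part of any platform's tooling: it identifies the format from the file's leading magic bytes and, for PNGs, reads the pixel dimensions from the IHDR chunk. The 1024-pixel minimum edge is an assumed stand-in for "high resolution", not a documented requirement.

```python
import struct
from typing import List, Optional, Tuple

# Magic-byte signatures defined by the PNG and JPEG file formats.
PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
JPEG_SIGNATURE = b"\xff\xd8\xff"

# Assumed threshold for "high resolution"; adjust to the platform's guidance.
MIN_EDGE_PX = 1024


def detect_format(data: bytes) -> Optional[str]:
    """Return 'png', 'jpeg', or None based on the file's leading bytes."""
    if data.startswith(PNG_SIGNATURE):
        return "png"
    if data.startswith(JPEG_SIGNATURE):
        return "jpeg"
    return None


def png_dimensions(data: bytes) -> Tuple[int, int]:
    """Read width and height from the IHDR chunk that follows the signature."""
    # Layout: 8-byte signature, 4-byte chunk length, 4-byte type 'IHDR',
    # then a 4-byte big-endian width and a 4-byte big-endian height.
    if data[12:16] != b"IHDR":
        raise ValueError("PNG is missing its IHDR chunk")
    width, height = struct.unpack(">II", data[16:24])
    return width, height


def check_source_image(data: bytes) -> List[str]:
    """Return a list of problems; an empty list means the file looks usable."""
    fmt = detect_format(data)
    if fmt is None:
        return ["not a JPEG or PNG file"]
    problems = []
    if fmt == "png":
        width, height = png_dimensions(data)
        if min(width, height) < MIN_EDGE_PX:
            problems.append(f"low resolution: {width}x{height}")
    # JPEG dimensions sit in a SOF marker deeper in the file; not parsed here.
    return problems
```

In practice you would call `check_source_image(open(path, "rb").read())` before uploading and fix or replace any file that returns problems.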

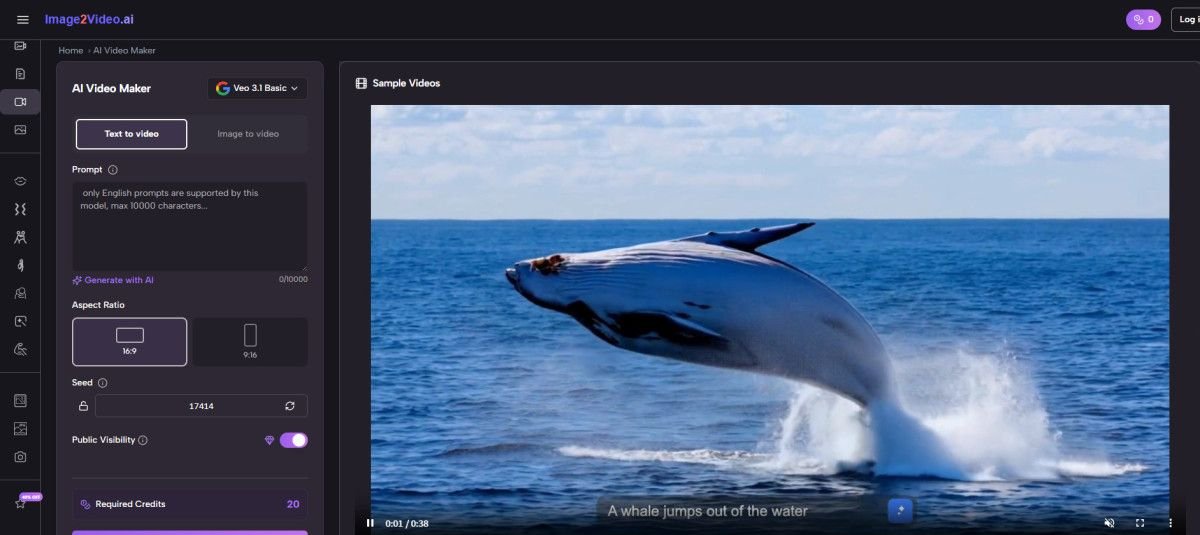

Defining Motion Vectors Through Strategic Natural Language Prompting

After the image is staged, the user provides a text description of the desired movement. This prompt acts as the director’s script, instructing the AI on how to handle camera movement, subject action, and environmental shifts. Being specific about the direction and speed of the motion typically yields the most consistent results.
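One way to keep prompts specific is to assemble them from the elements named above: camera movement, subject action, and environmental shift, plus an explicit pacing hint. The `MotionPrompt` class and its field names are hypothetical and do not reflect any platform's schema; the sketch simply produces a consistent plain-text prompt.

```python
from dataclasses import dataclass


@dataclass
class MotionPrompt:
    """Hypothetical prompt structure; fields mirror the elements in the text."""
    camera: str          # e.g. "slow push-in toward the subject"
    subject: str         # e.g. "the child turns and smiles"
    environment: str     # e.g. "leaves rustle in a light breeze"
    speed: str = "slow"  # overall pacing hint

    def render(self) -> str:
        # Emit the filled-in parts in a fixed order so that prompts stay
        # comparable when you try several variations of the same scene.
        parts = [
            f"Camera: {self.camera}" if self.camera else "",
            f"Subject: {self.subject}" if self.subject else "",
            f"Environment: {self.environment}" if self.environment else "",
            f"Pacing: {self.speed}" if self.speed else "",
        ]
        filled = [p for p in parts if p]
        return ". ".join(filled) + "." if filled else ""
```

Keeping the order fixed makes it easier to change one element at a time (for example, only the camera move) and see which change improved the result.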

Navigating The Computational Processing And Final Review Stages

The system then begins processing, which typically takes about five minutes. During this time, the AI renders a five-second MP4 video file. Once the status shows that the job is complete, the user can review the footage and download it for sharing or for use in larger projects.
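Before sharing or editing a downloaded clip, a simple sanity check is to confirm that the file really is an MP4 container. In the ISO base media file format used by MP4, a file normally begins with an `ftyp` box: a 4-byte big-endian size, the type `ftyp`, then a four-character major brand. The helpers below are a hypothetical sketch of that check; they do not verify the clip's duration or decode any frames.

```python
import struct


def looks_like_mp4(data: bytes) -> bool:
    """Return True if the data starts with an ISO BMFF 'ftyp' box."""
    if len(data) < 16:
        return False
    # Each box starts with a 4-byte big-endian size followed by a 4-byte type.
    size, box_type = struct.unpack(">I4s", data[:8])
    # An 'ftyp' box holds at least a major brand and a minor version (16 bytes).
    return box_type == b"ftyp" and size >= 16


def major_brand(data: bytes) -> str:
    """Return the major brand (e.g. 'isom' or 'mp42') stored right after 'ftyp'."""
    if not looks_like_mp4(data):
        raise ValueError("not an MP4/ISO BMFF file")
    return data[8:12].decode("ascii", errors="replace")
```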

Technical Considerations For Optimizing Output Quality And Realism

Achieving the highest level of realism often involves experimenting with different prompt structures and camera controls. In my testing, subtle movements often feel more authentic than extreme transformations. Users should be aware that highly complex textures may require several attempts before the motion stays temporally stable, without flickering or warping between frames.

Comparative Evaluation Of Professional Generative Video Performance Metrics

To better understand the differences between various generation tiers, it is helpful to look at the specific attributes that define a successful conversion. The table below outlines the primary factors that influence the final video quality.

| Performance Metric | Standard Processing Level | Professional Output Level |
| --- | --- | --- |
| Temporal Stability | Moderate frame consistency | High-fidelity motion tracking |
| Resolution | 720p | 1080p, enhanced quality |
| Generation Time | 10 to 15 minutes | Under 5 minutes |
| Motion Duration | 3 to 4 seconds | 5 to 10 seconds |
| Asset Security | Public queue storage | Encrypted private processing |

The Evolving Landscape Of Personal Heritage And Digital Archives

The long-term impact of this technology on how we preserve our history is profound. As we move away from static galleries and toward dynamic libraries, the way we relate to our own memories will become more visceral and immediate. For archivists and family historians, these tools provide a way to bridge the gap between generations, making the past feel like a living part of the present.

In my experience, the most successful projects are those that focus on the emotional core of the image. Whether it is the wind blowing through a child’s hair or the flickering light of a candle in an old room, these small details are what make a video feel real. As the models continue to improve, we can expect even greater levels of detail and longer clip durations, further expanding the horizons of what we can achieve with a single photograph and a clear creative vision.

Shaker Hammam

The TechePeak editorial team shares the latest tech news, reviews, comparisons, and online deals, along with business, entertainment, and finance news. We help readers stay updated with easy-to-understand content and timely information. Contact us: Techepeak@wesanti.com
